AI-Generated Images' Hidden Prejudices: The Importance of Acknowledgement

In the digital age, artificial intelligence (AI) has become an integral part of our lives, transforming fields from entertainment to law enforcement. However, bias in AI image generation has emerged as a pressing concern, and its consequences can be far-reaching.

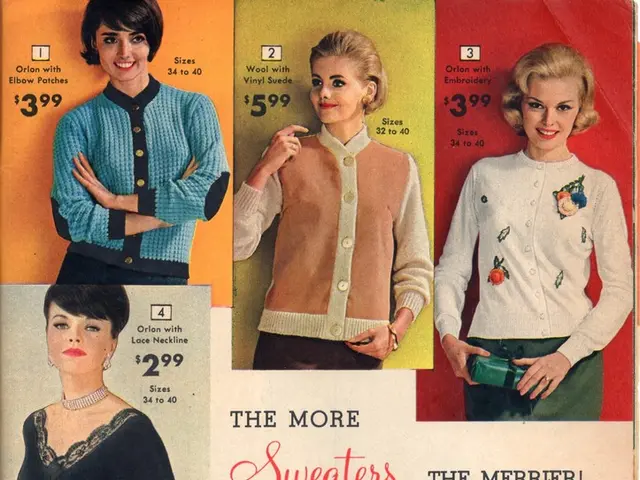

Recent studies have shown that AI models trained on large datasets scraped from the internet perpetuate harmful stereotypes. These datasets often overrepresent certain demographics, such as white men in positions of authority, while underrepresenting or stereotyping marginalised groups, including people of colour, women, and disabled people. As a result, AI models tend to default to narrow, stereotypical portrayals: for example, disproportionately associating ugliness with old white men, or depicting professionals predominantly as white men and service workers with darker skin tones.

The root of this issue lies in how many AI image generators are trained: largely through unsupervised learning. This method lets a model analyse vast datasets and learn patterns from them without explicit human guidance, but it also means the model learns and replicates whatever biases the data contains.
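To see why learning patterns without guidance reproduces bias, consider a deliberately oversimplified Python sketch. It is not how a real image generator works: the "model" here only estimates how often each hypothetical group appears in its training data and then samples from those frequencies. The group labels and the 80/20 split are invented for illustration.

```python
import random
from collections import Counter

# Hypothetical training set: the group shown in each image captioned
# "a doctor". The 80/20 split is invented purely for illustration.
training_data = ["group_a"] * 80 + ["group_b"] * 20

def fit(samples):
    """'Train' by estimating how often each group appears; nobody reviews the data."""
    counts = Counter(samples)
    return {group: n / len(samples) for group, n in counts.items()}

def generate(model, n, seed=0):
    """'Generate' n images by sampling groups from the learned frequencies."""
    rng = random.Random(seed)
    groups = list(model)
    return rng.choices(groups, weights=[model[g] for g in groups], k=n)

model = fit(training_data)
print(model)  # {'group_a': 0.8, 'group_b': 0.2}

outputs = generate(model, 1000)
print(Counter(outputs))  # sampled counts mirror the 80/20 skew of the data
```

Because nothing in the fitting step questions the data, the outputs inherit its skew. The concern that real systems can amplify, not merely copy, such imbalances is not captured by this toy.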

One of the most alarming consequences is the reinforcement of harmful stereotypes. AI-generated images can perpetuate and even amplify societal biases, shaping how groups are perceived culturally and socially and potentially influencing real-world attitudes and decisions.

Combating this requires a multilevel approach. Firstly, careful dataset curation is crucial: training datasets that are balanced, representative, and free from harmful stereotypes are essential to reducing bias at the model level.
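Assuming each image in a dataset carries a demographic annotation (many scraped datasets have none, so this is itself an assumption), a curation pass might start by auditing each group's share and then reweighting examples so that every group contributes equally during training. The Python sketch below shows one such technique, inverse-frequency weighting; the function names and labels are hypothetical, and collecting more data from underrepresented groups or resampling are common alternatives.

```python
from collections import Counter

def representation_report(labels):
    """Return each demographic label's share of the dataset."""
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}

def balancing_weights(labels):
    """Inverse-frequency weights: every label ends up with the same total weight.

    An example with label g gets weight total / (num_labels * count[g]).
    """
    counts = Counter(labels)
    total = len(labels)
    num_labels = len(counts)
    return [total / (num_labels * counts[label]) for label in labels]

def total_weight_per_label(labels, weights):
    """Sum the weights by label, to check that the groups are now balanced."""
    totals = Counter()
    for label, weight in zip(labels, weights):
        totals[label] += weight
    return dict(totals)

# Hypothetical annotations: group_a outnumbers group_b three to one.
labels = ["group_a"] * 6 + ["group_b"] * 2

print(representation_report(labels))  # {'group_a': 0.75, 'group_b': 0.25}
weights = balancing_weights(labels)
print(total_weight_per_label(labels, weights))
```

Here each group_b example receives a weight of 2.0 and each group_a example about 0.67, so both groups contribute a total weight of roughly 4. Reweighting only rebalances what is already in the dataset; it cannot remove stereotypes embedded in individual images or captions.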

Secondly, inclusive prompt engineering can steer AI towards more equitable outputs. Users can write prompts that explicitly specify identities, contexts, and diverse representation, overriding the model's biased defaults.
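A hypothetical Python helper can make this concrete by generating prompt variants that spell out identity and setting instead of leaving them to the model's defaults. The template, function name, and descriptors are illustrative assumptions, and the sketch only builds strings; it does not call any particular image-generation service.

```python
from itertools import product

def expand_prompt(subject, identities, settings):
    """Combine a subject with explicit identity and context descriptions.

    Stating identity outright leaves less room for the model to fall back
    on a stereotyped default for the subject.
    """
    return [
        f"a photo of a {subject} who is {identity}, {setting}"
        for identity, setting in product(identities, settings)
    ]

# Illustrative descriptors only; choose ones that fit your own context.
prompts = expand_prompt(
    "surgeon",
    identities=["a Black woman", "a South Asian man", "a wheelchair user"],
    settings=["in an operating theatre", "talking with a patient"],
)
for prompt in prompts:
    print(prompt)
```

Explicit prompts change individual outputs, not the model itself, so they complement dataset curation rather than replace it.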

Thirdly, companies developing AI models need to be more transparent, which would allow researchers to scrutinise the training data and identify potential biases.

Furthermore, using AI image generators in education can foster greater awareness of, and responsibility for, biased outputs. Employing these tools in classrooms to help students critically analyse the cultural stereotypes that AI reproduces can contribute to a more equitable and inclusive future.

Lastly, ethical AI development must prioritise fairness, representation, and inclusivity throughout the training, evaluation, and deployment of AI systems.

The stakes extend beyond imagery. In law enforcement, for example, biased AI could lead to wrongful arrests and entrench existing inequalities within the justice system, which is why ensuring diverse representation and minimising harmful stereotypes in training data matters so much.

The goal is to develop and use AI responsibly, in pursuit of an equitable and inclusive future. For further reading on AI bias, see articles from MIT Technology Review, Science, and the Partnership on AI.

AI has the potential to transform how images are created and how people are represented in them. However, recent studies show that models trained largely through unsupervised learning perpetuate harmful stereotypes, and the resulting biases in AI-generated images can shape societal perceptions and real-world decisions. Strengthening dataset curation, adopting inclusive prompt engineering, and promoting transparency from AI developers are crucial steps towards a more equitable and unbiased AI future.